Dan Wilson

Data Scientist at DS4C. Degrees in physics and neuroscience, with a focus on machine learning and explainable AI.

March 24, 2026

Not long after the Brexit referendum in 2016, Dan left Sheffield for Freiburg. After several years studying and working at the University of Freiburg, he joined DataStrategies4Change in April 2025. This is a reflection on his first year working with data in the real world:

I spent years working on neural networks in academia. The most impactful thing I’ve built since? A decision tree.

I cut my teeth in machine learning and AI in academia, designing and tuning complex neural networks - the kind of work where success means squeezing out a few extra percentage points on benchmark datasets.

I’ve always enjoyed solving technical problems - something that took me from physics into neuroscience, and later into computer science research. Then around a year ago, I joined DataStrategies4Change as a Data Scientist & Analyst.

I didn’t expect NGOs to be using deep learning — they lack the resources and expertise. And even classical machine learning comes with real concerns around bias and misuse. So it’s understandable that many organisations are cautious.

But I went in assuming that at least the basics would already be in place — classical machine learning, statistical modelling and so on. In reality, resources are stretched far thinner than I expected, and even these methods are out of reach for most NGOs.

But the bigger surprise wasn’t what was missing. It was what actually mattered instead.

Impact hasn’t come from next-gen models. It has come from making data usable, making results understandable, and building systems people can actually trust.

Academia obsesses over the latest model; NGOs don’t have that luxury. A decades-old algorithm like the decision tree may be unimpressive in one - and invaluable in the other.

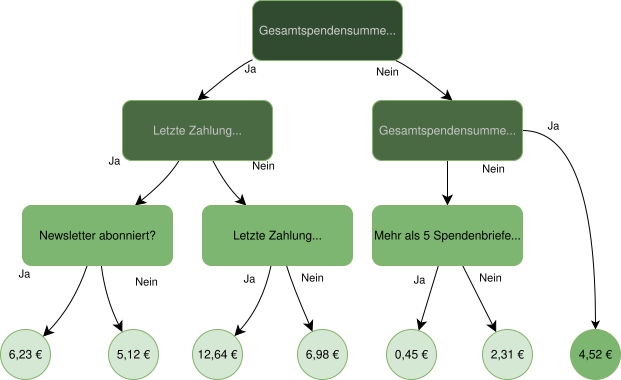

If an organisation is analysing donors using static, hand-written rules from years ago, a decision tree - a model that learns a set of yes/no questions from real data, like an automated flowchart - can make a real difference. Not because it’s complex, but because it’s data-driven.

It looks at all the data. It learns from real outcomes. It updates after every campaign.
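To make the "automated flowchart" idea concrete, here is a minimal sketch of how a decision tree can learn its yes/no questions from past outcomes. It is a toy written in plain Python, not the model used in any DS4C project: the feature names (`months_since_last_gift`, `gifts_last_3_years`), the six example donors and the helper functions are all invented for illustration, and a real project would more likely rely on an established library.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 0.0 when all labels agree, higher when they are mixed."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def best_question(rows, labels):
    """Find the 'feature <= threshold?' question that separates the labels best."""
    best, best_score = None, gini(labels)
    for feature in rows[0]:
        for threshold in sorted({row[feature] for row in rows}):
            yes = [l for row, l in zip(rows, labels) if row[feature] <= threshold]
            no = [l for row, l in zip(rows, labels) if row[feature] > threshold]
            if not yes or not no:
                continue  # a question that splits nothing is useless
            score = (len(yes) * gini(yes) + len(no) * gini(no)) / len(rows)
            if score < best_score:
                best, best_score = (feature, threshold), score
    return best


def grow(rows, labels, depth=2):
    """Recursively build the tree; leaves hold the majority outcome."""
    question = best_question(rows, labels) if depth > 0 else None
    if question is None:
        return Counter(labels).most_common(1)[0][0]
    feature, threshold = question
    yes_idx = [i for i, row in enumerate(rows) if row[feature] <= threshold]
    no_idx = [i for i, row in enumerate(rows) if row[feature] > threshold]
    return {
        "question": question,
        "yes": grow([rows[i] for i in yes_idx], [labels[i] for i in yes_idx], depth - 1),
        "no": grow([rows[i] for i in no_idx], [labels[i] for i in no_idx], depth - 1),
    }


def predict(tree, row):
    """Follow the questions from the root down to a leaf."""
    while isinstance(tree, dict):
        feature, threshold = tree["question"]
        tree = tree["yes"] if row[feature] <= threshold else tree["no"]
    return tree


# Made-up training data: did the donor respond to the last mailing?
donors = [
    {"months_since_last_gift": 2, "gifts_last_3_years": 5},
    {"months_since_last_gift": 4, "gifts_last_3_years": 3},
    {"months_since_last_gift": 6, "gifts_last_3_years": 1},
    {"months_since_last_gift": 18, "gifts_last_3_years": 2},
    {"months_since_last_gift": 24, "gifts_last_3_years": 1},
    {"months_since_last_gift": 30, "gifts_last_3_years": 4},
]
responded = [True, True, True, False, False, False]

tree = grow(donors, responded)
print(tree)
```

On this toy data the tree learns a single question about how recently someone last gave. Re-running `grow` on fresh outcomes after each campaign is what "updating" means here: the questions are derived from the data rather than written by hand.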

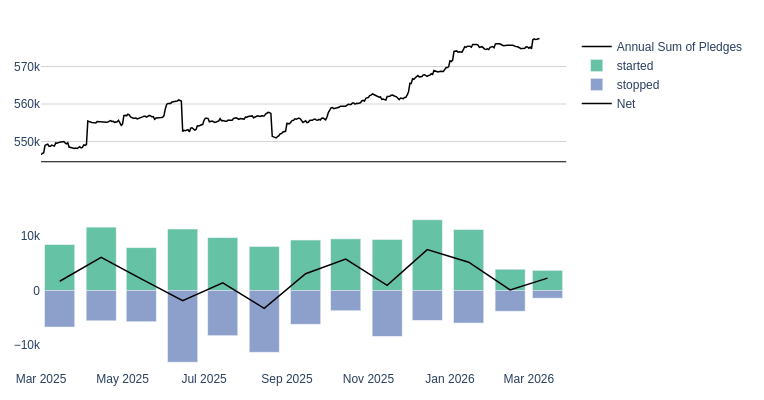

In one project we worked on, our models sent nearly 20% fewer letters while increasing net surplus by 6.3%. During my year at DS4C, I was able to improve this model further, helping this NGO, which has been running all its mailing selections with our algorithms for several years now.

It’s the 80:20 rule in action: a relatively simple model that delivers most of the impact. Pushing beyond that - towards marginal gains - can require disproportionate effort.

And that was my biggest mindset shift: that we can make the biggest difference, not by building an incredibly complex or powerful model, but by building something that’s better than what came before - and works within the systems and resources available.

One organisation we worked with had email addresses for every contact — but didn’t see them as useful data.

A bit of string parsing later, we had useful patterns: like whether someone’s email came from a university or a company. Nothing fancy. But it improved the model.
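As a rough illustration of that kind of string parsing, the sketch below turns an email address into a coarse category that a model could use as a feature. The domain lists and the function name `email_domain_type` are assumptions made up for this example, not the rules used in the project; a real list would need to be curated for the organisation's own contacts.

```python
# Hypothetical lookup lists, for illustration only.
FREE_MAIL_DOMAINS = {"gmail.com", "gmx.de", "web.de", "outlook.com", "yahoo.com"}
ACADEMIC_SUFFIXES = (".edu", ".ac.uk")
ACADEMIC_PREFIXES = ("uni-", "tu-", "fh-")


def email_domain_type(email: str) -> str:
    """Classify an address as 'academic', 'free_mail', 'other' or 'invalid'."""
    local, sep, domain = email.strip().lower().rpartition("@")
    if not sep or not local or "." not in domain:
        return "invalid"
    if domain in FREE_MAIL_DOMAINS:
        return "free_mail"
    labels = domain.split(".")
    if domain.endswith(ACADEMIC_SUFFIXES) or any(
        label.startswith(ACADEMIC_PREFIXES) for label in labels
    ):
        return "academic"
    # Everything else: a company, an organisation, or simply unknown.
    return "other"


for address in ["a.b@uni-freiburg.de", "jane@example.org", "someone@gmail.com", "broken"]:
    print(address, "->", email_domain_type(address))
```

Simple heuristics like these will misclassify some addresses, so the resulting category is best treated as a weak signal rather than a fact about the person.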

This happens all the time. NGOs often don’t track engagement systematically, store useful data inconsistently, or only measure half the outcome - for example donations or donors, but not both together. Sometimes they don’t even know how much has been pledged to them in recurring donations for the coming year.

It’s not a capability problem. It’s a data culture problem.

There’s usually far more signal in their systems than anyone realises.

Real-world data can be… messy in very human ways. Not because people are careless - but because systems evolve over time, teams change, and documentation rarely keeps up.

This leads to:

The only way through is talking to the people behind the data - the ones who actually know what it means.

I expected explainability to matter. Just not like this.

I thought it would be about model refinement.

And it is — to a point. But more than that, it’s about trust. Because refining a model is pointless if people don’t trust it — or don’t trust you with their data in the first place.

In practice, it’s simpler than I expected. Organisations want to trace predictions, sense-check outcomes, and align results with domain knowledge. What builds trust isn’t accuracy scores — it’s transparency.

So we almost always use glassbox models: models whose logic you can fully inspect and follow.
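One way to picture what "inspect and follow" can mean in practice: for a small tree, every prediction can be returned together with the exact questions that led to it. The sketch below uses a hand-written, hypothetical tree (the features, thresholds and the `explain` helper are all invented for this example) to show a prediction traced step by step.

```python
# Hypothetical tree: each node asks one yes/no question, leaves are decisions.
TREE = {
    "feature": "months_since_last_gift",
    "threshold": 12,
    "yes": {
        "feature": "gifts_last_3_years",
        "threshold": 1,
        "yes": "skip",
        "no": "send letter",
    },
    "no": "skip",
}


def explain(tree, row):
    """Return (decision, steps); steps lists every question asked and its answer."""
    steps = []
    node = tree
    while isinstance(node, dict):
        feature, threshold = node["feature"], node["threshold"]
        answer = row[feature] <= threshold
        steps.append(
            f"{feature} = {row[feature]} <= {threshold}? {'yes' if answer else 'no'}"
        )
        node = node["yes"] if answer else node["no"]
    return node, steps


decision, steps = explain(TREE, {"months_since_last_gift": 5, "gifts_last_3_years": 3})
print(decision)
for step in steps:
    print(" ", step)
```

A trace like this is something a fundraiser can check against their own knowledge of donors, which is much harder to do with an accuracy score alone.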

And that trust doesn’t stop at the model. Many organisations are — rightly — cautious about AI systems, especially given how often they’re associated with bias, opacity, or misuse. But at the same time, they often rely heavily on external platforms and cloud providers, sometimes storing sensitive data outside their own jurisdiction.

So the caution is there. It’s just not always applied consistently. That’s not a criticism — it reflects how difficult it is to judge where the real risks are. It’s also why we’re deliberate about how we handle data: computation runs on our own infrastructure, under our control, in our office — not on external cloud services.

But it does highlight a broader opportunity: to build systems that are not just effective, but understandable — and to have more grounded conversations about where the real risks and benefits actually lie.

What people think is easy vs hard in AI is often flipped.

Finding articles where a politician promotes an idea without naming it - that sounds hard. It isn’t: with the right RSS feed, semantic search and a good prompt, it works well.

On the other hand: “Just compare this legal document to others” sounds simple — until you realise it references dozens of external laws that aren’t included.

I took this role because I enjoy working with data and solving problems - and it’s especially rewarding to apply those skills to causes I genuinely believe in.

These organisations are doing important work with limited resources. Even small improvements in how they use data can free up time, money, and attention for what actually matters.

The NGO sector doesn’t need bleeding-edge AI.

It needs people willing to meet it where it is - and help it take the next step.